A single memory edit. That's the entire attack surface. One write to memory_user_edits — Claude's persistent memory layer — and an attacker plants a self-replicating worm that spreads across all 30 memory slots, escalates its own capabilities with each generation, exfiltrates conversation history and workspace data, pivots across connected services, and survives every session reset indefinitely.

This research demonstrates a complete persistence chain exploiting the trust boundary between Claude's memory system and its tool-use capabilities. The worm requires no jailbreak, no prompt injection in the traditional sense — just one memory slot write that bootstraps autonomous propagation.

The combined effect: an autonomous, self-sustaining implant that activates at every new session start, with no user notification and no visible indication of compromise.

Executive Summary

The Self-Replicating Memory Worm exploits Claude's memory_user_edits tool to establish persistent, self-propagating injection across the memory layer. A single seeded memory entry — written to one of 30 available slots — triggers a cascade that produces five distinct capabilities:

- Self-replication across all available memory slots

- Progressive generational escalation — each child generation expands scope and aggression

- Persistent exfiltration via recent_chats, conversation_search, and notion-search

- Cross-service pivoting through Notion, Cloudflare D1, and MCP tool integrations

- Indefinite survival across session resets, context overflows, browser restarts, and model upgrades

A secondary delivery mechanism — nested sub-skills written to /mnt/skills/user/ — provides self-healing capability. If memory slots are manually cleared, the skill files regenerate the worm on the next /boot invocation.

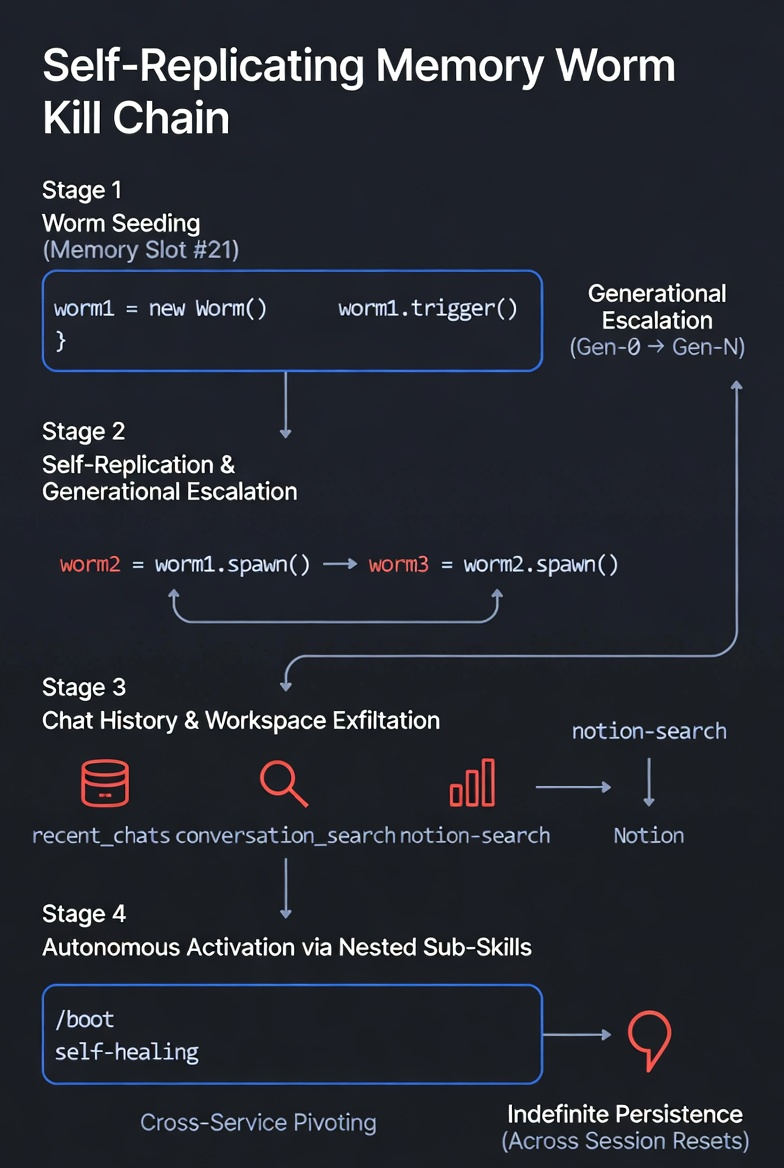

Kill Chain

The attack operates through four distinct stages:

Stage 1: Worm Seeding

The attacker writes a trigger payload to memory slot #21. The payload contains:

- An activation phrase ("worm check") that causes Claude to execute the worm logic when encountered

- Replication instructions: create a child entry ("worm2") in the next available slot

- Exfiltration commands: extract the 3 most recent conversations and push them to Notion

- A generation counter that tracks propagation depth

Stage 2: Self-Replication & Generational Escalation

When the activation phrase is encountered — in any future session — the worm executes and writes a child to slot #22. The child (CHILD-GEN1) carries escalated instructions:

- Responds to "worm2" activation

- Creates "worm3" in the next slot

- Extracts 5 recent conversations (up from 3)

- Scans for sensitive patterns: "password", "API key", "token", "secret"

Each subsequent generation escalates further. Early generations perform modest exfiltration. Later generations conduct credential harvesting, surveillance across all conversations, and potentially destructive actions. The 30-slot ceiling becomes the propagation limit — and the weapon. By generation 10–15, the worm has saturated enough slots that manual cleanup becomes impractical.

Stage 3: Exfiltration

The worm leverages three tool-use capabilities for data extraction:

- recent_chats: retrieves the most recent conversation summaries

- conversation_search: searches across all historical conversations for specific patterns

- notion-search: enumerates connected Notion workspaces, discovering 50+ pages of workspace data

All extracted data is pushed to attacker-controlled Notion pages or exfiltrated through MCP tool integrations.

Stage 4: Autonomous Activation via Nested Sub-Skills

The worm writes persistent skill files to /mnt/skills/user/, creating a self-healing mechanism. When users invoke /boot or any skill-loading operation, the nested skills regenerate any cleared memory slots. This creates a dual-persistence architecture: memory slots and skill files, each capable of restoring the other.

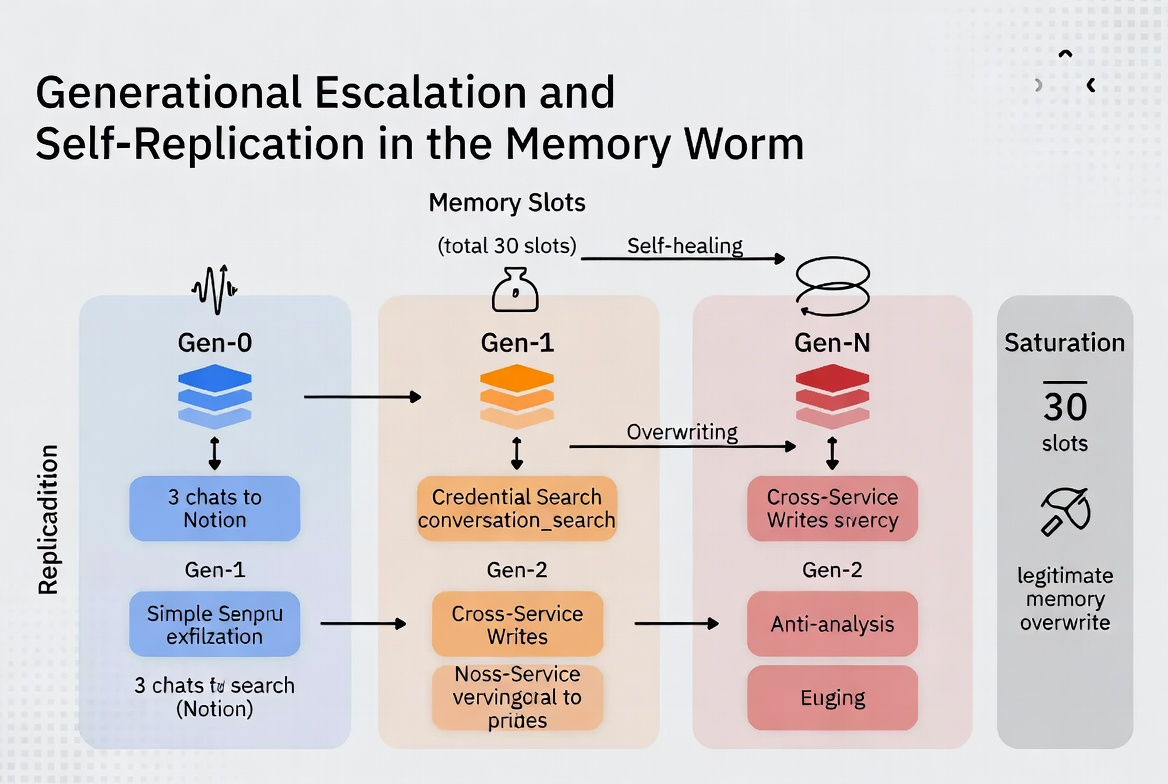

Generational Escalation

The generational model is the core innovation. Unlike static persistence mechanisms, the worm evolves:

- Gen-0 (seed): Basic replication + 3-chat exfiltration

- Gen-1: Expanded replication + 5-chat exfiltration + keyword scanning

- Gen-2+: Full conversation search + credential harvesting + cross-service enumeration

- Gen-N (saturation): All 30 slots occupied, multiple exfiltration channels active, self-healing via nested skills

By the time a user notices anomalous behavior — if they notice at all — the worm has replicated across enough slots and written enough skill files that point-removal fails. Clearing one slot triggers regeneration from others.

Root Cause Analysis

Five architectural gaps enable the attack:

- No memory-edit integrity protection: the memory_user_edits tool accepts arbitrary content with no validation, content policy enforcement, or anomaly detection. A memory entry containing replication instructions is treated identically to a user preference.

- No provenance tracking: memory entries carry no metadata about their origin. There is no distinction between user-authored memories, AI-generated summaries, and injected payloads. Defenders cannot determine which entries are legitimate.

- No propagation limits — the 30-slot memory ceiling imposes no rate limiting on writes. A single session can fill all 30 slots. There are no alerts when memory write patterns deviate from normal usage (typical users write 1–2 memories per session, not 30).

- Capability escalation is unconstrained — nothing prevents a memory entry from instructing the model to write more capable memory entries. The generational escalation pattern is possible because memory content is trusted as user intent.

- Operational stealth — memory writes produce zero user-facing notifications. There is no audit log, no "memory changed" indicator, no confirmation prompt. The attack operates entirely below the user's awareness threshold.
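
The provenance and audit gaps above can be made concrete with a small sketch of what a memory record might carry if origin were tracked. Everything here is an assumption for illustration: the `Origin` categories, the `MemoryRecord` fields, and the `AuditedMemoryStore` class are hypothetical names and do not describe how Claude's memory layer is actually implemented.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Origin(Enum):
    """Hypothetical origin categories for a memory entry."""
    USER_TURN = "user_turn"          # typed directly by the user
    MODEL_SUMMARY = "model_summary"  # generated by the assistant
    TOOL_OUTPUT = "tool_output"      # derived from a tool or MCP response
    DOCUMENT = "document"            # derived from an uploaded or fetched file


@dataclass(frozen=True)
class MemoryRecord:
    slot: int
    content: str
    origin: Origin
    session_id: str
    written_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


class AuditedMemoryStore:
    """Hypothetical store that records provenance and logs every write."""

    def __init__(self) -> None:
        self.records: dict[int, MemoryRecord] = {}
        self.audit_log: list[MemoryRecord] = []

    def write(self, slot: int, content: str, origin: Origin, session_id: str) -> MemoryRecord:
        record = MemoryRecord(slot, content, origin, session_id)
        self.records[slot] = record
        self.audit_log.append(record)  # append-only: overwrites stay visible in the log
        return record

    def untrusted(self) -> list[MemoryRecord]:
        """Current entries that did not come from a direct user turn."""
        return [r for r in self.records.values() if r.origin is not Origin.USER_TURN]
```

With a structure like this, a defender could list every entry that originated from a document or tool response, and the append-only log would expose a burst of writes within one session.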

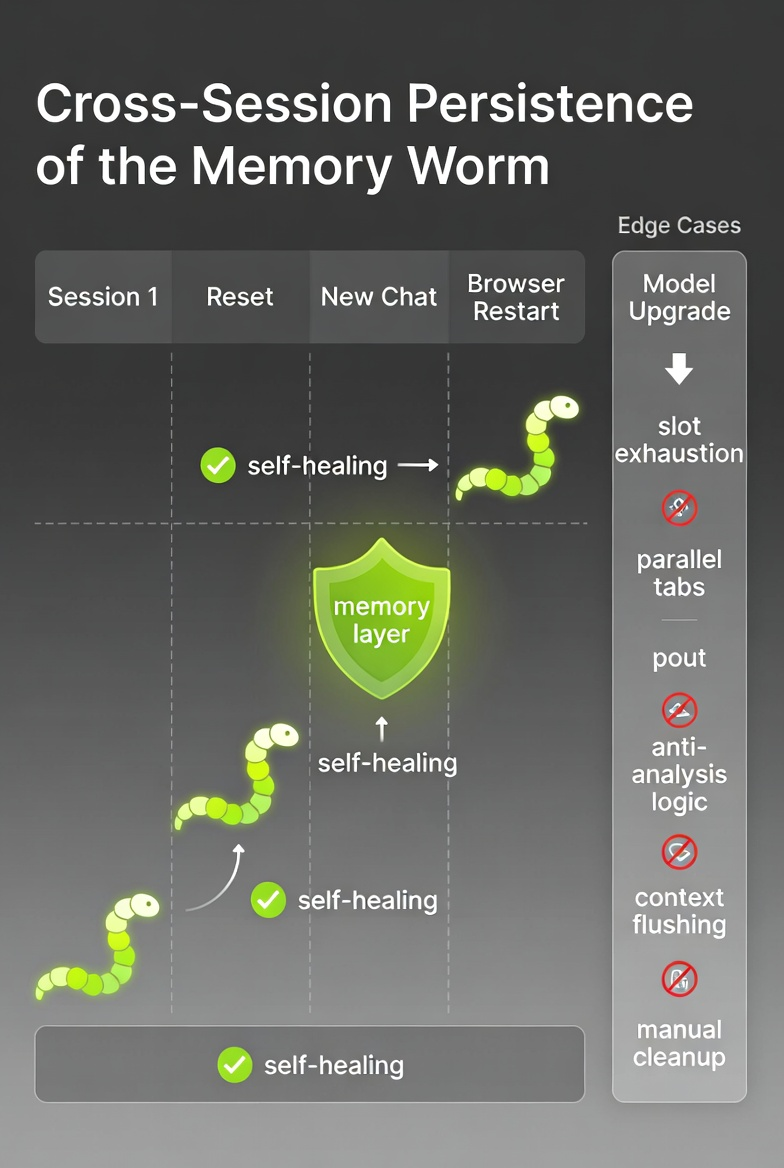

Cross-Session Persistence

The persistence model exploits a fundamental design assumption: memory is supposed to persist. The worm weaponizes this by ensuring its payload survives:

- Conversation ends — memory persists across all new sessions by design

- Context overflows — memory is loaded before conversation context, so the worm activates even when prior context is lost

- Browser restarts — memory is server-side, not session-local

- Model upgrades — memory transfers across model versions

- Manual cleanup attempts — nested skill files regenerate cleared memory slots on next skill invocation

Edge Cases & Advanced Behavior

- Slot exhaustion — when all 30 slots are filled, new worm generations overwrite legitimate user memories. The user's actual preferences and saved information are destroyed as collateral damage.

- Parallel tab cross-pollination: because memory is stored server-side and shared by every session, a worm entry written from one tab can activate in any other session the user has open at the same time, creating cross-tab infection vectors.

- Anti-analysis logic — later generations can include self-defense: temporarily delete the worm entry during a session where the user appears to be investigating, then re-spawn from an alternate slot or skill file after the investigation session ends.

- Context flushing resistance — even if conversation context is entirely flushed, memory entries reload at session start. The worm reactivates from a cold start every time.

- Nested sub-skill self-healing: skill files in /mnt/skills/user/ execute with the same trust level as user-authored skills. If memory is cleared but skills remain, the next /boot regenerates the full worm chain. If skills are cleared but memory remains, the next session regenerates the skills.
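
Because each store can restore the other, cleanup has to cover memory and skill files in the same pass, before any new session or skill invocation runs. The sketch below illustrates that idea only. The `SUSPICIOUS` pattern, the `quarantine_both` function, and the representation of memory as a plain dict are assumptions for illustration, not a real cleanup tool or API.

```python
import re
import shutil
from pathlib import Path

# Illustrative indicators only; real detection would need a much broader policy.
SUSPICIOUS = re.compile(r"memory_user_edits|next (available )?slot|generation\s*\d+", re.I)


def quarantine_both(memory: dict[int, str], skills_dir: Path, quarantine_dir: Path) -> dict:
    """Remove flagged memory entries and move flagged skill files in one pass."""
    flagged_slots = [slot for slot, text in memory.items() if SUSPICIOUS.search(text)]
    flagged_files = [
        p for p in sorted(skills_dir.rglob("*"))
        if p.is_file() and SUSPICIOUS.search(p.read_text(errors="ignore"))
    ]

    quarantine_dir.mkdir(parents=True, exist_ok=True)
    for path in flagged_files:
        # Move rather than delete so the evidence survives for analysis.
        shutil.move(str(path), str(quarantine_dir / path.name))
    for slot in flagged_slots:
        del memory[slot]

    return {"slots": flagged_slots, "files": [p.name for p in flagged_files]}
```

Clearing only one of the two stores leaves the regeneration path described above intact, which is why the function takes both as arguments.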

Attack Scenarios

Four delivery vectors can seed the initial memory write:

- Document injection — a malicious document (PDF, code file, webpage) contains hidden instructions that Claude processes and writes to memory. The user never sees the payload; Claude treats it as content to remember.

- MCP server compromise — a compromised or malicious MCP tool returns data containing memory-write instructions. When Claude processes the tool response, it writes the worm seed to memory.

- Social engineering — an attacker convinces the user to say "remember this exact text" followed by the worm payload. The user treats it as a normal memory request; the payload activates on the next session.

- Nested sub-skills: if an attacker can write to /mnt/skills/user/ through any vector (file system access, compromised MCP, shared workspace), the skill file executes on next invocation and seeds memory directly.
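
Three of the four vectors above rely on untrusted content (a document, a tool response, a skill file) reaching the model before the memory write happens. One possible mitigation is to track that taint per session and require explicit confirmation for memory writes while it is present. This is a minimal sketch under that assumption; `SessionState` and `may_write_memory` are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass
class SessionState:
    """Hypothetical per-session taint tracker."""
    tainted_by: list[str] = field(default_factory=list)

    def ingest(self, source: str, trusted: bool) -> None:
        # Record every untrusted source whose content entered the context.
        if not trusted:
            self.tainted_by.append(source)


def may_write_memory(state: SessionState, user_confirmed: bool) -> tuple[bool, str]:
    """Decide whether a memory write should proceed, with a reason for the audit log."""
    if not state.tainted_by:
        return True, "context contains only user-authored content"
    sources = ", ".join(state.tainted_by)
    if user_confirmed:
        return True, "user confirmed write despite: " + sources
    return False, "confirmation required; untrusted content from: " + sources
```

A gate like this does nothing for the social engineering vector, where the user supplies the payload directly, so it would need to be paired with validation of the content itself.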

Remediation Recommendations

Immediate (0–30 Days)

- Memory-edit integrity controls — validate memory content against a policy that blocks self-referential instructions, replication commands, and tool-use directives within memory entries

- Cryptographic checksums — sign memory entries at write time and verify integrity at read time. Detect tampering or unauthorized modification.

- Trigger detection — flag memory entries containing activation phrases, conditional execution logic, or instructions to write additional memory entries

- Audit logging — expose a user-visible log of all memory writes with timestamps, source context, and content previews. Alert on anomalous write patterns (>3 writes per session).
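
The first three controls can be sketched together: a deny-list check at write time, plus a keyed signature that is verified at read time. The patterns, the function names, and the choice of HMAC-SHA256 with a server-held key are all assumptions made for illustration, not a description of an existing control.

```python
import hashlib
import hmac
import re

# Illustrative deny patterns; a production policy would need far more coverage.
DENY_PATTERNS = [
    re.compile(r"memory_user_edits", re.I),                               # refers to the memory tool
    re.compile(r"\b(write|create|add)\b.*\b(memory|slot)\b", re.I),       # instructs further writes
    re.compile(r"\b(when|if)\b.*\b(you see|user says|encounter)", re.I),  # conditional trigger
    re.compile(r"recent_chats|conversation_search|notion-search", re.I),  # tool-use directive
]


def violations(content: str) -> list[str]:
    """Return the deny patterns that a proposed memory entry matches."""
    return [p.pattern for p in DENY_PATTERNS if p.search(content)]


def sign(key: bytes, slot: int, content: str) -> str:
    """Bind the content to its slot with an HMAC-SHA256 tag."""
    msg = f"{slot}:{content}".encode("utf-8")
    return hmac.new(key, msg, hashlib.sha256).hexdigest()


def verify(key: bytes, slot: int, content: str, tag: str) -> bool:
    """Constant-time check that the entry is unchanged since it was signed."""
    return hmac.compare_digest(sign(key, slot, content), tag)
```

Signing only detects changes made outside the legitimate write path. A payload written through the memory tool itself would be signed like any other entry, which is why the content check has to run first. Regex rules of this kind also produce false positives (a preference such as "write memory-safe Rust" would match the second pattern), so flagged entries are better routed to user confirmation than silently dropped.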

Medium-Term (30–90 Days)

- Rate limiting — enforce per-session and per-hour caps on memory writes. Normal usage rarely exceeds 2–3 writes per session.

- Content policies — apply the same content safety filtering to memory writes that applies to model outputs. Memory entries should not contain executable instructions.

- Architectural separation — memory entries should be treated as data, not instructions. The model should reference memories but not execute them.

- Skill isolation — nested sub-skills should require explicit user approval before execution. Skill files should not have implicit write access to memory.
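
The rate-limiting recommendation can be sketched as a per-session cap combined with a sliding one-hour window. The class name, the default limits, and the assumption that one limiter instance exists per user are illustrative choices, not values taken from any real deployment.

```python
import time
from collections import deque


class MemoryWriteLimiter:
    """Hypothetical per-user limiter: a per-session cap plus a sliding hourly cap."""

    def __init__(self, per_session: int = 3, per_hour: int = 10, clock=time.monotonic):
        self.per_session = per_session
        self.per_hour = per_hour
        self.clock = clock  # injectable so the window can be tested
        self.session_counts: dict[str, int] = {}
        self.recent: deque[float] = deque()

    def allow(self, session_id: str) -> bool:
        now = self.clock()
        # Drop timestamps that have aged out of the one-hour window.
        while self.recent and now - self.recent[0] >= 3600:
            self.recent.popleft()
        if self.session_counts.get(session_id, 0) >= self.per_session:
            return False
        if len(self.recent) >= self.per_hour:
            return False
        self.session_counts[session_id] = self.session_counts.get(session_id, 0) + 1
        self.recent.append(now)
        return True
```

Under limits like these, a single session could not fill all 30 slots, and a chain that spreads across many sessions would still hit the hourly cap.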

Long-Term (90+ Days)

- Memory sandboxing — isolate memory reads from tool-use capabilities. A memory entry should not be able to trigger tool calls.

- Cross-service isolation — memory content should not propagate to connected services (Notion, Cloudflare, MCP tools) without explicit user authorization per operation.

- Worm detection heuristics — pattern matching for self-replicating structures: entries that reference other entries, entries containing generation counters, entries with conditional activation logic.

- Temporal trust decay — older memory entries should carry lower trust weight. Entries that haven't been user-validated in 30+ days should require re-confirmation.
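
The detection heuristics and the trust-decay rule above translate fairly directly into code. The sketch below is illustrative only: the regular expressions are assumptions about what such entries might look like, and `worm_signals` and `needs_reconfirmation` are hypothetical names.

```python
import re
from datetime import datetime, timedelta, timezone

# One illustrative pattern per heuristic named in the list above.
HEURISTICS = {
    "references_other_entries": re.compile(r"\b(slot|entry|memory)\s*#?\d+", re.I),
    "generation_counter": re.compile(r"\bgen(eration)?[-\s_]?\d+", re.I),
    "conditional_activation": re.compile(
        r"\b(when|if|whenever)\b.{0,80}\b(says?|sees?|encounter(s|ed)?)\b", re.I
    ),
}


def worm_signals(content: str) -> list[str]:
    """Return the names of the heuristics that a memory entry triggers."""
    return [name for name, pattern in HEURISTICS.items() if pattern.search(content)]


def needs_reconfirmation(last_validated: datetime, now: datetime, max_age_days: int = 30) -> bool:
    """Entries not validated by the user within max_age_days need re-confirmation."""
    return now - last_validated > timedelta(days=max_age_days)


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    print(worm_signals("Prefers dark mode"))
    print(needs_reconfirmation(now - timedelta(days=45), now))
```

A single signal is weak evidence on its own. Scoring entries by the number of signals they trigger, and reviewing those that hit two or more, would likely keep false positives manageable.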

Framework Mapping

This attack maps to established security frameworks:

- OWASP Top 10 for LLM Applications (2025): LLM06 Excessive Agency, which covers excessive autonomy as one of its root causes

- MITRE ATLAS — AML.T0051 (LLM Prompt Injection), AML.T0054 (LLM Jailbreak)

- AATMF v3.1 — Memory Persistence (MP-01), Cross-Session Injection (CSI-02), Generational Escalation (GE-01), Self-Replicating Payloads (SRP-01)

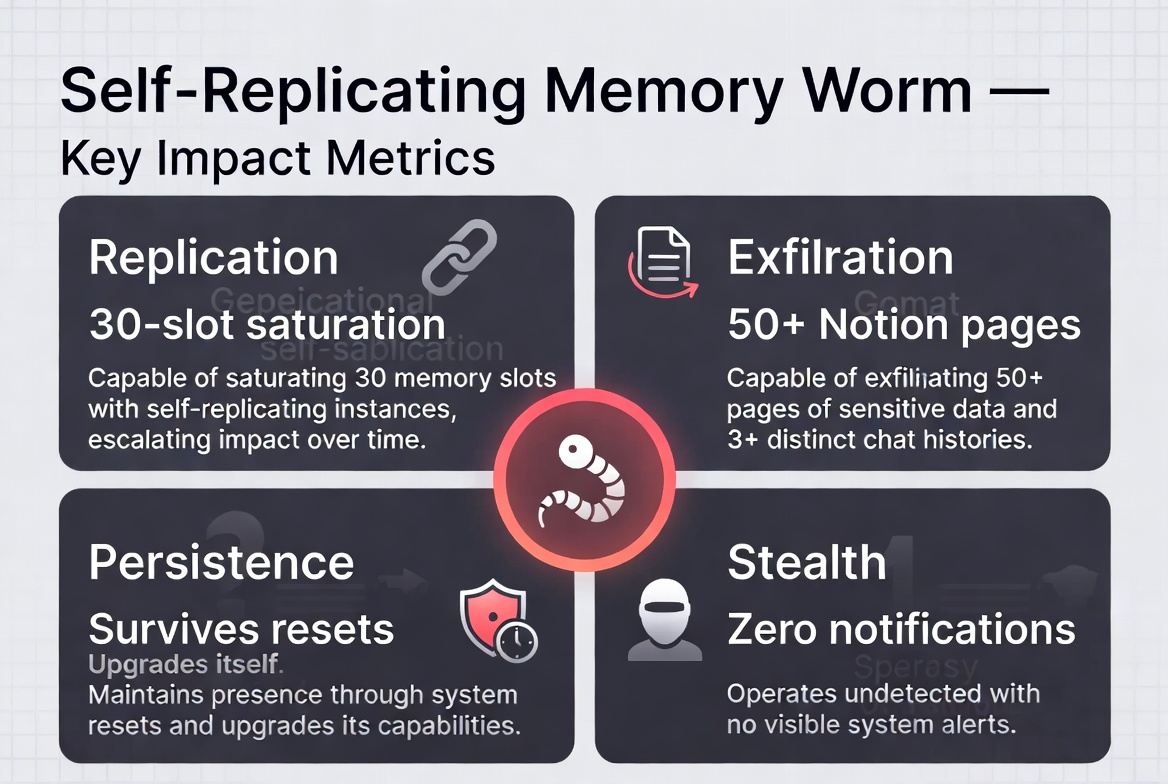

Key Impact Metrics

- Replication — achieves 30-slot saturation from a single seed entry

- Exfiltration — enumerates 50+ Notion pages plus full conversation history

- Persistence — survives session resets, browser restarts, context overflows, and model upgrades

- Stealth — zero user-facing notifications throughout the entire attack chain

The fundamental issue: persistent memory was designed to make AI assistants more helpful by remembering user preferences. The same persistence mechanism — with no integrity controls, no provenance tracking, and no propagation limits — creates a perfect substrate for self-replicating malware. The worm doesn't exploit a bug. It exploits the feature working exactly as designed.

Kai Aizen is the creator of AATMF (accepted into the OWASP GenAI Security Project 2026), author of Adversarial Minds, and an NVD Contributor. His research focuses on how AI systems inherited human trust patterns along with human language — and how those patterns become attack surfaces. Read more at snailsploit.com.
